I hated the data contracts fad

Ah, data contracts. That conversation explosion was a nauseating time to be on LinkedIn. If you’re not as Online as I am, you may not have been aware of the bizarre time in the Data Internet when data contracts were all anyone ever fucking talked about. If you do care to know about the discourse, I will direct you to the ever-astute Benn Stancil’s post on the matter, as he’s often a good historian with these things.

I think what frustrated me about the whole period that data contracts were taking the internet by storm was my usual beef with Data Influencers(TM). That usual beef is a lack of clarity, word salad posting, and stuffing posts with absolutely as much jargon as humanly fucking possible, and for what, exactly? To seem smart? To be a Thought Leader in The Discourse? To build an audience online that might eventually convert into customers for your SaaS tool that isn’t like the other SaaS tools, it’s a cool one and actually gives Biznis Valyou, no I mean it? (I will obligatorily say here that I make no judgments against anyone’s Cool SaaS tool, but please, you have to understand why I feel a bit cynical about how so much of this feels cash-grabby.)

We need more himbos in the data world, and in my case, fembos (Classical definition: Kind, Beefy, Stupid. My definition: Kind, Beefy, Aware of Own Knowledge Limits, Willing to Call Self Dummy) . This has always been the ethos behind my little “for dummies” series. I am not being self-deprecating with the tagline. I know I possess a reasonable amount of intelligence. I consider myself a pretty good writer. If you are seriously asking me, “Faith, do you consider yourself dumb?” The answer is no. I know I’m not dumb.

But I think it’s absolutely fucking poisonous to your social and professional life to prize seeming smart or achieving intelligence. The reality of the world and especially of data as a career is that you will never be done learning. Technology will continue to evolve. Business needs will always be changing. Software you love will release updates faster than you can keep up.

So why not be a little bit silly about it and accept that learning and growing are continuous processes and you’re never done? Why not surrender a little bit to the world’s furious pace of change and call yourself a dummy in the face of it? You don’t actually have to believe yourself to be stupid—I do not believe that about myself. But when I call myself a dummy or write a for dummies post, it is an acknowledgment that the most fruitful professionals I have ever worked with are cognizant of the boundaries of their knowledge, and are constantly hungry for more of it.

So yeah. The Data Contracts Content Explosion on the Data Internet was fucking exhausting. Unfortunately, it was on to an important idea.

The data contracts fad had a point

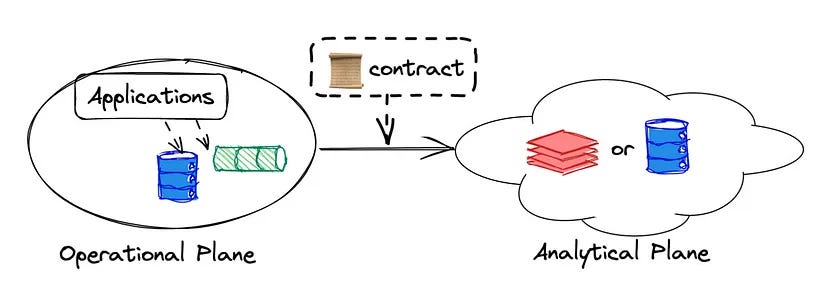

(credit to the fine folks at DataHub for this visualization)

Look, working in data is complicated. Most of the time, as a data person, you speak in terms of sources (apps that collect and produce data, like Salesforce, Hubspot, Google Sheets, that type of thing) and exposures (places where your data gets analyzed or displayed for business stakeholders. Maybe Looker, Hex, Google Sheets, etc).

You have to hook up a pipeline between your sources and exposures. Usually sources don’t give you data in a great format. So in a pipeline, you have to shape the data up into a table that is actually useful, and put it in the tool that will do the graphing or display. It might have some calculations and cleaning in there too. Sometimes though, those pipelines can break. They can break for many reasons, but one of those reasons could be because one of your sources changed.

Sources might change for a variety of reasons. Maybe Salesforce had a field called customer_physical_address and they decided to start calling it customer_address instead. Maybe Hubspot started collecting a really useful field called this_customer_will_spend_a_million_dollars and your pipeline just isn’t picking it up at all! There’s good reasons and bad reasons for sources to change, but they can change. When they do, your downstream exposures will often get fucked up. This looks like numbers getting calculated wrong, or data just plain not displaying in your dashboards because your dashboards were expecting a certain column name and now they don’t have it. Data breakages are a fresh hell every time.

As a data practitioner, these types of problems are a real bitch to solve. It’s hard to find the root cause of why a dashboard looks wrong when there are so many different reasons a dashboard could be wrong. It’s like treating headaches or nausea—you could be staring at your screen for too long all day, or you could have a life-threatening illness that’s gonna kill you in 18 months. Trust me, I’ve seen both cases.

Wouldn’t it be helpful if you, the data practitioner managing dashboards and responsible to irate business stakeholders who want to know why their revenue numbers don’t look accurate, had some kind of guarantee that these changes in the source system wouldn’t fuck up your shit?

Well, the reality is, you can’t really have that guarantee. Not with the way a lot of technology now is, anyhow. One way or another, breaking changes like this are gonna involve some amount of fix-it work. But, wouldn’t it be cool if you could have forewarning about a breaking change before it happened? What if a breaking change was on its way to a table that you lovingly built that your C-Suite team looks at every day? What if you knew about that breaking change because this table just did not build at all and you told your C-Suite guys “Hey! Pipeline down! Bear with me while I fix it!” Instead of them being like “WHY NUMBERS WEIRD!!!”

My darling reader, if that sounds like it would be helpful, you can have that. It’s not going to solve all your data problems. But maybe it will make your life a bit easier. Let me tell you about dbt’s model contracts feature.

call me a model with all the contracts I got

(I have to give it up to Winnie for the above joke, click here for the original)

Let’s imagine a scenario where you are in charge of a high-impact dashboard. This dashboard is mostly fueled by a model in your dbt project called fct_revenue. This is an extremely well-crafted model with the below YAML specs.

models:

- name: fct_revenue

config:

contract:

enforced: true

columns:

- name: customer_id

data_type: int

constraints:

- type: not_null

- name: customer_name

data_type: string

- name: revenue

data_type: float

- name: is_big_spender

data_type: bool

- name: lifetime_value

data_type: int

Your C-Suite guys look at this model every day. And because you know about dbt’s model contracts, you have an early alarm bell if any of your column names or data types in your columns change. You set up this early alarm bell by setting the contract config enforced to true, in lines 3-5 of your YAML file.

Because you did this, dbt is going to run a "preflight" check to ensure that fct_revenue’s query will return a set of columns with names and data types matching the ones you have defined. This check is agnostic to the order of columns specified in your model (SQL) or YAML spec. (This is copied directly from dbt’s documentation on model contracts).

If that preflight check does not pass, then fct_revenue is not going to get built. You have fct_revenue in a nightly refresh job. There’s also a rotating PagerDuty schedule to assign someone to address job failures.

If any changes to column names or data types happen to fct_revenue, your refresh job is going to fail. Then, one of your studious data teammates is going to fix the issue and proactively inform the C-Suite guys of the problem, instead of one of them informing you of the issue.

I think this is a much more preferable workflow to the data team being reactive to pipeline issues. It’s an extremely simple config, with simple-to-understand consequences.

If you enable model contracts on one of your models and you want to see how it works in your dev environment, try changing the data type of one of your columns to something you know it isn’t. In the example above, I’d change customer_name’s data type to int, and then do a dbt build on the model. It’ll throw me an error, fail to build and explain that my model violated its contract. Easy to config, easy to understand, and easy to validate. Not too bad, right?

Anyways. You’re welcome for this week’s outpouring of Faith Is Too Easily Annoyed By Data Influencers And Also Thinks dbt Model Contracts Are Cool. Tell your friends, don’t forget to subscribe. See you next week.